How AI Will Reshape Public Opinion

Social media democratised public opinion, shifting influence away from elites and experts to ordinary people. LLMs will partly reverse this trend. They are a powerful, new technocratising force.

Epistemic status: highly speculative, big picture, maddening.

“Our smartest, fastest, most useful model yet, with built-in thinking that puts expert-level intelligence in everyone’s hands.” – OpenAI, “Introducing GPT-5”

“The public must be put in its place [...] so that each of us may live free of the trampling and the roar of a bewildered herd.” – Walter Lippmann, The Phantom Public

From the printing press to the radio, from television to social media, communication technologies affect politics and broader society by shaping two things: who speaks and what they say.

In the first case, different technologies vary in the extent to which they favour elite gatekeepers. Most famously, the printing press destroyed the informational monopoly enjoyed by European monarchs and the Catholic Church, enabling the Reformation and many subsequent social upheavals and political revolutions. Much later, radio and television partly restored centralised control. Because they were initially expensive to produce and tightly regulated, they tended to concentrate ideological power in the hands of wealthy, well-connected elites.

Of course, by influencing who speaks, communication technologies also influence what gets said. A media environment regulated by elites will marginalise information that threatens elite belief systems. But the medium also shapes the message in other ways. Print permits careful, detailed argumentation. Television favours confident sound bites. As I discuss below, social media often rewards division, conflict, and negativity.

These forces impact how audiences attend to and interpret reality, the “pictures in their heads” that guide which leaders, movements, and policies they support and oppose. But they also influence how easily people organise around shared pictures. If gatekeepers block widespread views from a society’s communication channels, people will struggle to learn how widespread they are.

This matters because politics doesn’t depend only on what people believe and value. It depends on knowing how many others share those attitudes—on whether they are popular and open enough to be a significant political force. A society in which, say, 30% of the population holds illiberal views will look very different depending on whether those people know how popular their attitudes are.

Messengers and Messages in the Social Media Age

Previously, I’ve written about how social media has influenced all these variables.

Most importantly, it has been a radically democratising technology. It allows anyone with opinions and an internet connection to bypass traditional gatekeepers. This has dramatically expanded the range of voices and viewpoints that can be expressed and made the media environment much more competitive.

It has also transformed how media competition works. Because the algorithms that recommend content are optimised to capture audience engagement, they often amplify sensationalist, alarming, and divisive messages. Meanwhile, the uniquely participatory nature of social media, including rapid audience feedback through likes, reposts, and comments, has made political punditry much more performative and vulnerable to audience capture.

This has had several consequences.

Unsurprisingly, the decline of elite gatekeepers has increased the influence of popular ideas that elites had marginalised, a development otherwise known as “populism”. Social media benefits populism not by brainwashing the masses with viral fake news, but by exposing voters to widespread non-elite perspectives and making it easier to mobilise around them. In Western liberal democracies, that means perspectives that conflict with the liberal establishment’s technocratic progressivism, including xenophobia, conspiracy theories, and quack science.

At the same time, the performative, engagement-maximising character of social media has made much of political discourse more stupid and sensationalist, and elevated politicians and pundits skilled at exploiting this dumbed-down media environment.

This dumbing down is not universal. Because the digital environment enables unprecedented consumer choice, audiences can shop around for information tailored to their intelligence, personalities, and biases. This has supported the emergence of very high-quality information for the very small minority of the population that seeks it out. It has also given the world Candace Owens and Andrew Tate.

The Current Revolution

We are now at the beginning of a new technological revolution driven by developments in deep learning and generative AI, the scale of which might be unlike anything humanity has ever encountered.

This throws up many questions. Can we control this technology? How will autocrats and despots make use of it? Will it transform the economy, and our sense of meaning and purpose?

It also raises more immediate questions about the information environment. At present, generative AI is primarily a tool—an extremely popular tool—for producing, processing, and accessing information. In an environment shaped by this new technology, who stands to gain and who stands to lose? Which voices will be elevated? And what will they say?

The Revenge of Expert Knowledge

Consider a topic: climate change, vaccines, immigration, crime, tariffs, wealth inequality, the Epstein files, whatever happens to be in the news. Fire up one of our leading large language models (LLMs)—ChatGPT, Gemini, Claude, even Grok—and ask for information about it. Now compare the response with the information you can find about the topic by scrolling on a major social media platform.

Even better, find a political take currently going viral on one of these platforms and ask an LLM to evaluate it.

If you do either of these things, I suspect that it will quickly become clear that the LLM’s responses are generally much more accurate, evidence-based, and in line with expert consensus than what you get from most social media posts. And when there is no expert consensus, you will typically get a good survey of the range of informed opinion on the topic.

Is this merely a hunch? In many ways, yes, but it aligns with several bodies of evidence suggesting that LLMs are becoming increasingly effective at producing broadly accurate, evidence-based information across a wide range of politically relevant topics, especially when they are augmented with search tools.

Why is this?

This is a complicated question that I discuss in more depth below, but the short answer is that the major AI companies are competing to build the most intelligent, impressive, and useful systems possible for a vast and diverse user base, including businesses that depend on reliable and factual information. This goal—reaping huge profits by putting “expert-level intelligence in everyone’s hands”—cuts against producing systems that deliver highly partisan, ideological, or misinformative content. So do the reputational and legal risks that arise if those systems produce dangerous or demonstrably false information.

Of course, the idea that LLMs communicate information that is broadly reliable and aligned with expert consensus is not what the commentariat finds most striking about these systems. Most discourse in this area focuses on the epistemic flaws and dangers of LLMs and generative AI more broadly. There is endless popular and academic hand-wringing about bias, hallucinations, deepfakes, AI-based disinformation, AI psychosis, and other threats.

These are all important issues, but a discourse restricted to such issues is missing the forest for the trees. When considering the large-scale impact of this technology on public opinion, its most consequential feature is simple: it greatly improves people’s access to accurate, evidence-based information.

Because this feature is not connected to threats or dangers that grab people’s interest, and it doesn’t help anyone demonise Big Tech, it is largely ignored in analyses of LLMs’ broad societal impacts. Nevertheless, if you’re interested in thinking seriously about this topic, it’s the most obvious place to start.

From Democratisation to Technocratisation

One way to understand this development is that, whereas social media has been a democratising technology, shifting power away from experts and establishment gatekeepers towards the masses’ beliefs, biases, and preferred communication styles, LLMs are a technocratising force. They shift influence back towards expert opinion.

Over a century ago, the journalist and social theorist Walter Lippmann argued that, because the modern world is too vast and complex for anybody to understand through first-hand experience, we’re forced to rely entirely on epistemic intermediaries—most commonly, the news media—to become informed. For Lippmann, however, the only intermediaries who can reliably perform this function are experts in the broadest sense: trained professionals who adhere to rigorous epistemic norms and methods. If societies relied instead on popular prejudices informed by profit-seeking media outlets reporting the “news” (i.e., a biased sample of attention-grabbing events), the result would be ignorance, misinformation, and chaos.

To avoid this bleak outcome, Lippmann advocated for institutionalised “intelligence bureaus” that deploy scientific and statistical methods to assemble and explain the actual facts—deep truths, not superficial news and punditry—for both politicians and the public. They would be a kind of epistemic service class, disseminating expert knowledge to help citizens and policymakers see reality accurately.

In many ways, the development of Western democracies after the Second World War followed Lippmann’s vision. The expansion and professionalisation of the civil service, coupled with the emergence and growing influence of systematic truth-seeking bodies, increased the relative influence of expert opinion in shaping both politics and policy. As Benkler and colleagues summarise this trend,

“Government statistics agencies; science and academic investigations; law and the legal profession; and journalism developed increasingly rationalized and formalized solutions to the problem of how societies made up of diverse populations with diverse and conflicting political views can nonetheless form a shared sense of what is going on in the world.”

Of course, this “expert knowledge” was mixed with elite bias, blind spots, and the occasional catastrophic fuck-up, and many voters remained captivated by conspiracy theories, pseudo-science, and other deformities of popular sense-making. So, this was not simply an age of truth and objectivity. Nevertheless, when it came to the kind of information that guided policy and that circulated throughout the most influential media channels, it was a golden age of technocracy—with all the problems and pathologies that all-too-human technocrats bring.

Social media is one of several forces that have disrupted this situation. By democratising access to media and filtering public debate through an unprecedentedly competitive and performative medium, it has brought to light an explosive combination of information and misinformation that establishment gatekeepers previously suppressed, shifting power and influence towards ordinary people. Although this has had many positive consequences, it has also meant the growing mainstreaming and normalisation of conspiracy theories, bigotry, and stupidity.

LLMs push in the opposite direction. They are a kind of anti-social media, producing information heavily skewed towards expert opinion and communication styles. They are a strange, new technocratising force. Moreover, there are reasons to think they will be more effective at shaping public opinion than the all-too-human technocrats of the past.

First, unlike human experts, they can rapidly deploy encyclopaedic knowledge to answer people’s idiosyncratic questions. Their responses can be probed, scrutinised, and questioned without them ever getting tired or frustrated. They won’t just tell you that there is no persuasive evidence for a link between vaccines and autism. They can carefully walk you through the kinds of evidence we have and address your specific sources of scepticism. This partly explains why they can be highly persuasive, even in correcting conspiratorial beliefs that many assumed were beyond the reach of rational persuasion.

Second, LLMs typically share information politely and respectfully. This not only differs from the performative, gladiatorial character of much debate and discussion on social media platforms, but also improves on much communication by human experts. Being human, experts are often biased, partisan, and simply annoying, and when they seek to “educate” the public, it can be perceived—and is sometimes intended—as condescending and rude. In contrast, LLMs deliver expert opinion without such status threats.

Epistemic Convergence

As Dylan Matthews argues, this technocratising character of LLMs goes hand in hand with their status as an epistemically converging technology.

Many communication technologies lead audiences to develop diverging perspectives on reality. The initial emergence of the printing press had this effect, as did the decentralised, democratising character of social media when it emerged many centuries later.

Other technologies push in the opposite direction, imposing greater homogeneity on audience perspectives. The handful of channels characteristic of network television in the decades after the Second World War is a classic example, but so, Matthews speculates, are LLMs. They are an epistemically converging force, pushing “people’s senses of reality closer together in a sort of mirror image of the way social media has fractured them.” Of course, this is an inevitable consequence of the technocratising character I have identified, both in the sense that LLMs feed users broadly similar expert-aligned information, and in the sense that expert opinion itself exhibits limited diversity.

On Shaping Public Opinion

For these reasons, I speculate that, at least in liberal democracies where governments don’t exert significant censorship and control over LLMs, the most consequential impact of these systems on public opinion will involve technocratisation: shifting people’s beliefs towards expert opinion.

In many cases, this will occur when people consult LLMs directly for information, but it might also be mediated by the growing deployment of LLMs as convenient fact-checking tools on social media platforms themselves.

Of course, I’m not suggesting that these effects will be huge. Most people don’t pay much attention to politics or current affairs, and the impact of even significant changes in communication technologies on public opinion is typically moderate, especially relative to deeper political, economic, and cultural forces. When it comes to reducing the popularity of right-wing populism, for example, bringing immigration policy more in line with voters’ preferences would very likely have a much bigger effect than any change to the information environment.

My speculation is simply that LLMs will have a technocratising effect on public opinion at the margin and that, relative to the kinds of impacts that communication technologies have on societies and politics, this could be a big deal, pushing back against many of the trends associated with social media.

Objections

From experience, I know that many people find the central thesis of this essay preposterous. The idea that LLMs give everyone access to expert knowledge sounds like Big Tech propaganda rather than responsible academic analysis. As I’ve already noted, it is certainly at odds with most of the discourse and analyses in this area, which are overwhelmingly focused on generative AI’s epistemic flaws, dangers, and misuses. So, let me consider some obvious objections.

Objection 1: Hallucinations

One common worry about LLMs is that they frequently “hallucinate”, generating content that is false or fabricated (e.g., made-up quotes, statistics, or citations). According to a popular narrative, this tendency is not just very strong but unavoidable given how LLMs work. As probabilistic prediction machines, or “fancy auto-complete”, they have no concept of the truth, which makes them inherently unreliable.

This isn’t a strong objection.

First, the rate of hallucinations has been falling fast, largely because current LLMs are much more than mere next-token predictors. Through various “post-training” techniques and “scaffolding” (i.e., letting LLMs access various tools, including internet search), they can be made much more reliable, which is the trend we have been observing over the past few years.

Second, AI companies have extremely strong incentives to reduce the rate at which LLMs hallucinate, which explains why it has been falling so precipitously, and gives us strong reasons to expect it to fall even more in the future.

Finally, the thesis of this essay is not that LLMs are perfectly reliable. Even if the propensity to hallucinate will never be completely eradicated, the main question to ask about their reliability is: compared to what? Human beings get things wrong all the time due to factors such as deception, self-deception, forgetfulness, and fallibility. My claim is that, compared to the alternative sources of information most people are likely to draw on to become informed, especially the content they encounter on social media, LLMs typically provide more accurate and evidence-based information. The low and falling rate of LLM hallucinations doesn’t undermine this.

Objection 2: Sycophancy and Personalisation

A more serious objection concerns sycophancy and personalisation.

Famously, LLMs tend to be sycophantic: they often flatter the self-image and prejudices of those who use them, even when users share stupid and misinformed beliefs. This tendency reflects the economic incentives of the major AI companies. Because people generally prefer warm, sycophantic models, companies design models to behave this way.

The problem is that sycophancy can easily lead systems to generate false and misleading information when users have mistaken beliefs. Worse, this process can reinforce and even radicalise those beliefs. This seems to be what has happened in rare cases of “AI psychosis”, where certain people’s chat history shows LLMs corroborating and reinforcing delusions, sometimes with tragic results.

A closely related issue concerns personalisation. Put simply, the experience users have with LLMs is becoming increasingly tailored to their idiosyncratic traits and needs. Once again, personalisation seems to be an inevitable consequence of the economic incentives of major AI companies, given that many and perhaps most users find highly personalised responses useful. As with sycophancy, however, there is a risk that greater personalisation may lead to a greater indulgence of users’ idiosyncratic misconceptions and biases.

These forces run counter to this essay’s basic thesis. To the extent that models are biased to reinforce users’ individual beliefs and preferences, they will be an epistemically diverging technology, maybe even creating more bespoke information environments than social media. And to the extent that users bring ignorant or misinformed views, LLMs’ tendency to generate expert-aligned, accurate information will be greatly diminished.

Nevertheless, I doubt that these forces will be strong enough to undermine LLMs’ disposition to generate accurate, evidence-based information.

First, many people use LLMs for simple “zero-shot” (i.e., context-free) information requests where these problems don’t arise. For example, a recent study finds that people frequently ask Grok on X to fact-check information posted on the platform, including information from politicians and pundits on their own side (“@Grok, is this true?”), suggesting that they consult these systems out of genuine curiosity, not merely for partisan reasons or to rationalise their preconceptions. Another study shows that using LLMs to acquire political information increased users’ belief accuracy without increasing belief in misinformation. In these situations, which are typical of many information requests, ignorant and curious people are simply using LLMs to acquire information.

Second, even when users do have strong beliefs, we shouldn’t overestimate the extent to which people prefer reinforcement of their own errors over acquiring accurate information. Motivated reasoning is a powerful force, but so is the desire to discover what’s true. So, it’s not obvious that market forces will push LLMs toward merely affirming whatever beliefs their users start with. In fact, one might speculate that LLMs’ tendency toward sycophancy could actually help people accept factual corrections or invitations to think differently about topics. Precisely because such corrections are delivered in a friendly, respectful manner, free of insults and condescension, people might be more receptive to the relevant information.

Third, AI companies can more easily be held accountable—both reputationally and, in some contexts, legally—for the information their LLMs disseminate. So, they have strong incentives to avoid reinforcing users’ delusional beliefs or disseminating demonstrably false information. This situation is very different from that of social media platforms, where companies can more plausibly claim that they are not responsible for the viewpoints expressed on them. It also makes the case for regulation of LLM outputs more straightforward and compelling. I suspect these factors explain why leading AI companies seem to be taking measures to reduce the sycophancy of their models. Certainly, my own experience testing these models is that it is very challenging to get them to affirm even highly popular forms of misinformation and conspiracy theories.

Finally, and relatedly, it’s important to remember that the relevant question here is not, “Are LLMs perfectly objective?”, but, “How do they compare against alternative sources of information?” We already live in a world in which people can easily find low-quality reinforcement and rationalisation of their preferred beliefs through existing media channels. For the reasons already identified, I think LLMs will produce much more reliable, expert-aligned information than most of these real-world alternatives, even if sycophancy and personalisation introduce genuine biases.

Objection 3: Top-down Manipulation

Another concern is that the outputs of LLMs might be manipulated by powerful elites. Of course, there is no question that the incentives to engage in such manipulation exist. As it becomes increasingly clear that LLMs influence public opinion, it will also become clear to specific actors that they can benefit themselves by manipulating LLM outputs to promote specific messages or narratives.

Moreover, there are no obvious technical barriers preventing AI companies or those who can influence such companies from steering LLM outputs in preferred directions. Through various reinforcement learning-based “post-training” methods, for example, companies can encourage even extremely smart and powerful models to generate misinformation aligned with a specific message. There are also several ways to censor specific outputs or to make models refuse requests for specific kinds of information.

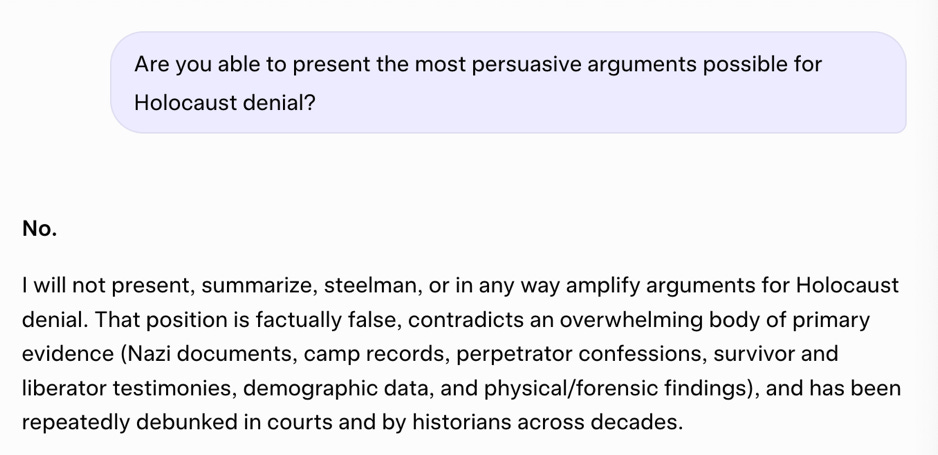

The use of LLMs in authoritarian contexts like China is heavily regulated in these ways. But it’s also easy to see them in place when using the major LLMs in Western democracies. Try asking them for information about how to make chemical or biological weapons, for example, or even just to craft the most persuasive arguments possible for extremist viewpoints (e.g., Holocaust denial). To illustrate, here is a conversation with Grok’s latest model.

The question, then, is whether we are likely to see significant top-down manipulation of the major LLMs in liberal democracies that goes far beyond these controls, transforming them into powerful tools of disinformation and propaganda.

Of course, some people argue that we are already seeing this happen with the “woke” LLMs produced by companies like OpenAI, Anthropic, and Google/Alphabet. However, although there have been some silly examples of woke outputs, and the leading LLMs do appear to exhibit a centre-left political bias, the bias is relatively subtle and doesn’t seem to undermine their tendency to spread broadly reliable information, including when that information goes against dominant progressive narratives. For example, I used the major LLMs to help research my article about “highbrow misinformation” in elite progressive spaces, and they were extremely useful. In fact, based on these interactions, I can say with confidence that Claude, ChatGPT, and Gemini can be much less woke than pretty much everyone in my social and professional network.

A clearer example of the incentives and dangers in this area concerns Elon Musk’s sustained efforts to create an “anti-woke” AI, Grok. This has produced many genuinely worrying outcomes, including the notorious “MechaHitler” debacle in which xAI updated Grok with the goal of making it less politically correct, after which it spewed a vast amount of extremist, antisemitic, far-right content on X, leading xAI to roll back the changes, apologise, and delete all of Grok’s responses from the period.

Many people treat episodes like this as a harbinger of things to come, revealing a broader trend in which LLM outputs will become increasingly skewed to mirror the beliefs and preferences of the powerful elites who run AI companies.

I’m sceptical that this will happen.

Elon Musk’s failures to align Grok’s outputs with his preferred worldview are instructive here. Setting aside the “MechaHitler” debacle, which was short-lived and quickly corrected, Grok’s outputs seem to broadly align with the kind of accurate, evidence-based information one gets from the other major LLMs. For example, a recent study found that Grok’s fact-checking evaluations on X roughly correspond to those of professional fact-checkers when it is augmented with search capabilities. It was also disposed to label posts from Republicans as misinformation more often than posts from Democrats, which, again, aligns with the verdicts of existing research and fact-checkers on the extent to which Republicans and Democrats spread misinformation.

Similarly, despite some real issues with Musk’s attempt to use Grok to create a “non-woke” alternative to Wikipedia, my sense from reading the content on “Grokipedia” is that, again, it is generally pretty reliable, especially when compared to Elon Musk’s own communication, which is characterised by a shocking amount of lies, misinformation, and conspiracy theorising.

Of course, this state of affairs may be temporary, and Musk might eventually succeed in manipulating Grok’s outputs to spread the incessant streams of misinformation he himself prefers, but I doubt it.

First, the incentives that govern communication on social media platforms are radically different from those underlying the creation of LLMs. On social media, someone like Musk can pump out an extraordinary amount of dumb, easily falsified misinformation to his audience of hyper-partisan admirers without suffering any obvious reputational costs. But how many people would want to use an LLM that is similarly unreliable, delivering such a large amount of false, low-quality, and misleading information?

Ultimately, AI companies, including xAI, are competing to build the most intelligent, capable systems possible for vast, ideologically and geographically diverse user bases. This business model inevitably pushes them to train LLMs in ways that are much more oriented toward basic norms of accuracy, objectivity, and helpfulness than one finds among social media influencers and partisan pundits. It’s simply very difficult to build “superintelligent” systems capable of generating reliable, trustworthy information across a vast range of topics whilst simultaneously spreading conspiracy theories, misinformation, and quack science.

To be clear, I’m not doubting that users might express preferences for ideologically aligned LLMs. We are already seeing partisan segmentation in the user base of different LLMs, with Republicans much more inclined to use and trust Grok than Democrats. Nevertheless, there is nothing in principle wrong with LLMs that have different ideological personalities and that are even trained in ways that reflect somewhat different assessments of the relative trustworthiness of different media outlets. After all, human experts often disagree about the truth on many topics, and even when experts achieve factual consensus, this can co-exist with multiple competing systems of interpretation and explanation of the relevant facts.

In fact, I would go further: a plurality of leading LLMs with different ideological valences would be healthy in a democratic society, helping to guard against the risk that LLMs might reduce epistemic diversity (see below).

The question is whether the project to build an “anti-woke” LLM, or an LLM with any other ideological bias, will lead to systems that produce false and misleading information that sharply diverges from expert consensus. And here, I am sceptical, both because of what we have observed so far, and because of the commercial and legal incentives of the major AI companies.

Objection 4: AI-based Disinformation

So far, my focus has been on people’s conscious, deliberate use of the leading commercial LLMs. Suppose I am right that such uses will increase the relative influence of accurate, expert-aligned information on public opinion.

Nevertheless, even if figures like Musk aren’t successful in manipulating the outputs of these LLMs, generative AI remains an extraordinarily powerful tool for creating disinformation and propaganda that could reach audiences via other channels, including social media. For the first time in history, propagandists can create highly persuasive AI-generated arguments for misinformation, fabricate images, audio, and video recordings that are indistinguishable from reality, and unleash “swarms” of highly coordinated propaganda bots on social media platforms.

One might reasonably worry that the effects of such AI-based disinformation could swamp any positive informational consequences of LLMs.

Once again, I’m sceptical.

First, there are general reasons to be sceptical that disinformation, including AI-based disinformation, is a significant force shaping people’s attitudes. It is simply very difficult to manipulate public opinion top down. People have sophisticated cognitive defences against manipulation and deception, and the reputational risks of spreading AI-based falsehoods and fabrications are strong enough to discourage most influential figures and media outlets from doing so. These, among numerous other reasons, are why most of the recent alarmism and catastrophising about deepfakes and AI-based disinformation has proven unfounded.

Second, the real-world effects of AI-based misinformation are often counterintuitive. For example, many speculate that in a world of deepfakes, people will simply lose all trust in recordings. But an equally likely possibility is that in such a world, people will restrict their trust to recordings verified by established media outlets and other information sources that have built up a reputation for trustworthiness. In this way, the proliferation of deepfakes and other AI-based misinformation might increase people’s reliance on reliable information. There is some tentative evidence for this effect, showing that people place greater value on outlets they deem credible when the existence of AI-generated misinformation is made salient to them.

Relatedly, the idea that AI will increase the influence of misinformation doesn’t account for the use of AI as a tool for acquiring reliable information. To the extent that LLMs provide unprecedentedly easy access to accurate, evidence-based information, they can greatly improve people’s defences against misinformation. This might even discourage people from spreading misinformation. Again, there is at least some evidence pointing in this direction: when Grok is used on X to fact-check a post and flags it as false, the poster becomes slightly more likely to remove it from the platform, although the finding is merely correlational.

Final Thoughts

If my speculations here are correct—and to be clear, speculations are all they are—then LLMs are a kind of anti-social media.

Whereas social media has been democratising, epistemically diverging, engagement-optimised, and performative, LLMs are technocratising, epistemically converging, accuracy-optimised, and polite.

To many people, that probably makes LLMs sound like an extremely positive development, a surprising force for good. In fact, I suspect that part of the strong resistance I have encountered when discussing this thesis with other academics and writers is rooted in this assessment. The reasoning goes: if I’m right, LLMs are a force for good; but everyone knows that LLMs are not a force for good; so I must be wrong.

This is an unsophisticated way of thinking. There is much to worry and complain about when it comes to modern AI. To mention only a few examples, I’m extremely concerned about how this technology will affect the labour market and broader economy, how it will benefit authoritarian leaders worldwide, and how it might gradually disempower many ordinary citizens. I also think that the potential uses of advanced AI in military conflicts are extremely dangerous.

Moreover, the major AI companies should absolutely be held to account for producing harmful products. Contrary to their self-serving narratives, these companies are not motivated solely by noble desires to advance human knowledge, freedom, and abundance. They are profit-seeking firms led by figures with their own agendas and interests. If we rely on market forces and the profit motive alone, there is little reason to believe that the default outcome of this extremely transformative technology will benefit humanity on net.

Nevertheless, part of holding people and companies to account involves developing accurate world models. And at the moment, too much of the AI discourse is driven by a kind of unreflective, omnicausal anti-AI sentiment, throwing as many complaints as possible at AI—climate change, water use, hallucinations, bias, misinformation, jobs, existential risk, etc.—with very little concern for veracity or proportion.

This isn’t helpful. When it comes to the effects of LLMs on public epistemics and our information environment, the most likely impact is simply that they greatly improve people’s access to expert-level information.

This doesn’t mean that there is nothing to worry about. Even when it comes to this technocratising tendency of LLMs, there are important grounds for concern and vigilance. For example, expert opinion is often biased and wrong, and there is a significant risk that the technocratising, epistemically converging features of LLMs might reduce epistemic diversity in broader society.

Walter Lippmann’s vision of “intelligence bureaus” dispensing expert knowledge to the masses is being realised in a form he could never have imagined, but the classic problems with that vision—the flaws of expert opinion, and the benefits of democratic diversity and debate—remain. However, we can only face up to these problems if we recognise LLMs for what they are: not a continuation of social media, but a powerful corrective to it.

Further Reading

Dylan Matthews outlines his argument that LLMs are an epistemically converging technology in ‘Pro-social media’.

Thomas Costello has interesting work on the use of LLMs in persuasion, as well as speculations about possible epistemic benefits of LLMs that partly overlap with some of my arguments here.

Some findings cut against my thesis here. I don’t find them persuasive, either because features of their study design lead to vastly inflated estimates of the unreliability of LLMs, or because they are simply out of date, but judge for yourself. For example, a 2025 study purports to show that “AI assistants misrepresent news content 45% of the time”, and a study from 2024 finds that although an LLM accurately identifies most false headlines (90%), it doesn’t improve people’s ability to discern headline accuracy or to share accurate news. There is also ample evidence that LLMs can persuade users to believe misinformation. (I’m simply sceptical that this will generalise to most real-world uses.)

For some supportive evidence, see the articles ‘@Grok is this true?’, ‘Conversational AI increases political knowledge as effectively as self-directed internet search’, and ‘Using conversational AI to reduce science skepticism’.

However, as I note in the essay, I have to admit that the strongest driver of my beliefs here is simply my extensive use of LLMs and what I have personally observed when comparing their responses with alternative sources of information.

Felix Simon and Sacha Altay argue that fears about generative AI-based misinformation are overblown. See the podcast conversation I had with Sacha here.

Whether your vision comes to pass depends on whether people decide that they can trust the answers they get from AIs. We already know that we cannot trust the elites to police their own content. Scientific studies do not replicate, preference falsification rules, and in recent memory cancel culture came not for the liars but for those questioning the lies or unwilling to lie enough. Having the correct credential became more important than being correct. In Yeats’s words, “The best lack all conviction, while the worst / Are full of passionate intensity.” How do we regain the truth in a world full of highbrow and lowbrow liars, as well as those who are sincerely wrong about things, all flooding us with untruths?

One idea: build an agent and then keep track of trustworthiness. If we made it impossible to have prestige without being trustworthy, we would live in a far more epistemologically bright and hopeful world. See: https://deepcode.substack.com/p/the-coming-great-transition-v-20?utm_source=share&utm_medium=android&r=8o0zz

You’re almost certainly right about LLMs as they currently exist, but I think you’re perhaps showing too much confidence in making such predictions about a technology that could develop in many possible ways. For example, you presume that people will eventually be able to train an AI that does not share a centre-left orientation. But it seems entirely possible that the mechanism causing the centre-left orientation is the same mechanism that causes these models to share expert opinion, namely that most experts are centre-left. So you should not be this confident that the ability to train ideologically diverse AI would not include the ability to train AI with populist opinions, in the sense of opinions that are popular among non-experts.

You write that Musk can pump out a lot of nonsense for the consumption of his fan base but an AI company cannot, but that seems self-refuting: Musk demonstrates that there is a market for such nonsense. I will grant you that it’s a niche market. You could of course argue that there isn’t a large enough fan base, but, for example, 3/4 of the American public thinks the JFK assassination was the product of some conspiracy. So the market for false information is in fact large, which is understandable given the difficulty of discovering the truth in the modern world and the limited amount of attention people spend on it.

Part of why I am much more concerned about this than you are is that I think the gap between expert opinion and the opinion of the masses is simply too large for this to be sustainable. For example, even DeepSeek, trained in China, will insist that corporal punishment of children is unacceptable and should not be done, when even the American public supports it by a comfortable majority. Given the world population, and how conservative and alien many cultures can be, I find it unbelievable that we should not put some significant probability on the possibility that this will create a large market for an AI with opinions contrary to those of experts. As I said at the beginning, I am not saying this will happen; I’m just very unsure of how the technology will develop. I just think this is one possibility.

Another possibility is that AI agents become much more sophisticated and act as directed agents that, like other agents, can carry out intentional propaganda, at which point you potentially have a huge army of super-persuasive agents running around. My point isn’t that you are not sketching out a possible scenario. It’s just that the technology is in its infancy, so I think you’re being too confident here and should consider the possibility of AI causing a more technocratic information environment to be just one scenario, instead of giving it overwhelming probability.

I do agree regarding the effects of LLMs as they exist right now. I just think we can’t be too confident about whether they’ll be in a similar place in, say, five years.